Is there a future where AI could code itself? I wouldn’t be surprised, but I highly doubt that even then you would completely wipe out coding as a field, because I am pretty sure you would still need people who know how to code to teach the AIs new syntax and logic. Not to mention, I don’t see a chance in hell that CEOs would actually be fine with not controlling how an AI codes and develops; they would want a human eye on it to ensure it’s doing what they want.

But maybe AI could legitimately replace CEOs, since it’s already geared towards digesting large datasets - just give it the ability to determine the day-to-day operations for the company based on numbers.

In other news: The CEO of Nvidia doesn’t understand how programming works.

I wonder if we could replace jumped-up CEOs with LLMs.

Didn’t a company in Poland do just that?… Looking for link

Edit: Polish company: https://www.businessinsider.com/humanoid-ai-robot-ceo-says-she-doesnt-have-weekends-2023-9?op=1

How does an AI go golfing with their Senators in exchange for special favors? How do they host lavish yacht parties for Supreme Court justices?

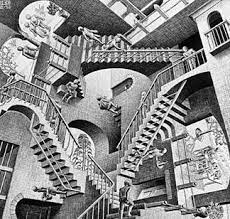

A future where literally no one knows how their software works sounds terrible.

Senior dev here: it’s already like that.

I know how my code and the code of everyone under me works. I have a good idea of how the systems I run the end result on work.

But under that layer there are several more layers between that and hardware that I only have a faint idea of.

Everybody in tech relies on some level of abstraction. Crazy-smart hardware engineers are no exception; they’re not dealing with fundamental electricity stuff. They wouldn’t be able to deliver the advanced chip designs we take for granted today if they were.

This was already true before LLMs.

My takeaway from this whole thing is “Become dependent on us.”

That’s literally the entire end goal of supra-human organizations like governments and corporations, and why the American constitution is built upon reining in their powers. Obviously their system has become corrupted in recent times, but that was foreseen and accounted for.

Don’t worry about intellectual work that’s the best job to support your family today, you’re now free to toil in the fields doing jobs we give migrants for minimum wage plus board in shitty shared bunk houses!

> toil in the fields

I am unironically building myself a woodshop so I can retire from tech into a life of making real tangible objects, with no JIRA tickets, no kanban boards, and no stand-ups.

My plan is to raise pigs. Because I’ve raised them before and they’re so much easier to deal with than users.

I’ve seen this episode of Star Trek

Star Trek is the best case scenario.

We’ve already blown past Wall-E and are currently trending toward Robocop, Idiocracy, or Max Headroom, with an eye to achieving Terminator or the Matrix.

Or the Butlerian Jihad

I think Dune is what we get when we come out the other side of the Terminator/Matrix future.

I remember when Bill Gates said: 512K of RAM will be more than enough forever.

And even then, you can probably share it between all seven computers.

It will replace junior-level devs, but you will still need people supervising it and doing systems-level design and integration. And those people will need to know how to code. Software tools that abstract away core knowledge and first principles don’t actually negate the need to know these things.

If anything this will make deep engineering and domain knowledge even more valuable, as AIs will replace a lot of the amateurish side of dev work these days, but the humans who do remain in that loop will require a much greater level of expertise.

Guy who benefits from spreading misinformation about AI that runs on his GPUs spreads misinformation.

Oh my god, it’s “visual programming” all over again. This is such a monumentally stupid take that fundamentally misunderstands and denigrates what software engineering is even about, I can’t even–

Actually, I like job security. Yeah, that’s right, coding is easy and dumb, don’t bother to learn, kids! In unrelated news, here’s my business card…

I could see a situation where logic and math classes were all that was needed, because the AI could work out the code from whatever logic was required.

If you can gather requirements and lay out a detailed and perfectly accurate flowchart, then congratulations: you’ve just programmed. It’s done. The first difficult part is over. Translating that flowchart into machine code is easy; tons of tools already do that visually or however you want it, and LLMs are just an additional tool for this.

Then there’s the second difficult part of a project’s lifecycle: debugging, maintenance, and support. Here again AI can help occasionally as part of the toolbox, but most of those tasks don’t require writing (a lot of) code.

All the senior software engineers I know spend, optimistically, 20% of their time actually “writing code”. That’s your upper limit on the efficiency gains of LLMs for higher-level software engineering. Saying LLMs will replace programmers is like saying CAD software will replace architects.
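
To put rough numbers on that 20% ceiling: this is basically Amdahl's law. Here is a quick Python sketch, assuming the 20% figure above holds and using made-up speedup factors for the code-writing part. The function names are mine and purely illustrative, not anything from a real library.

```python
def overall_speedup(coding_fraction: float, coding_speedup: float) -> float:
    """Amdahl's law: overall speedup when only the code-writing share gets faster.

    coding_fraction: share of total time spent writing code (assumed, e.g. 0.2).
    coding_speedup:  how many times faster that share becomes (hypothetical).
    """
    if not 0.0 <= coding_fraction <= 1.0:
        raise ValueError("coding_fraction must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    untouched = 1.0 - coding_fraction          # meetings, design, debugging...
    accelerated = coding_fraction / coding_speedup
    return 1.0 / (untouched + accelerated)


def max_speedup(coding_fraction: float) -> float:
    """Limit of overall_speedup as the code-writing part takes zero time."""
    if not 0.0 <= coding_fraction <= 1.0:
        raise ValueError("coding_fraction must be between 0 and 1")
    if coding_fraction == 1.0:
        return float("inf")
    return 1.0 / (1.0 - coding_fraction)


if __name__ == "__main__":
    fraction = 0.2  # the "optimistic" 20% from the comment above
    for factor in (2, 10):
        print(f"{factor}x faster coding -> {overall_speedup(fraction, factor):.2f}x overall")
    print(f"infinitely fast coding -> {max_speedup(fraction):.2f}x overall")
```

So even if the LLM wrote code instantly and perfectly, the whole job would get at most 1.25x faster under this assumption, because the other 80% of the work is untouched.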

And we now also have ads in GeForce. They are chasing the profits now, with increasing contempt for consumers, like so many others. This will end with this guy getting a payout, and everyone else left cleaning up the mess.

I like how the takeaway is not “Once AI exceeds our ability to understand or compete with it, humans will not be the ones building or controlling it anymore. We’re in for a very very different future than what currently exists, so we better be pretty fuckin responsible with what trajectory is dialed in at the moment that that happens, because once the line is crossed there’s no going back. I mean, we could maybe have a conversation about whether doing this is even a good idea in the first place, but the possibility of preventing it seems more vanishingly remote with every passing year, so at least we could make an emergency crash priority out of AI safety, like a couple of years ago ideally but definitely right now.”

No, the takeaway is “Hey guys here’s some career advice for the short term. I will not be taking questions concerning anything after that. Hey we made a new chip BTW.”

The underlying message is “buy our stock because our GPUs power AI”

I agree with you wholeheartedly. Powerful CEOs are not the almighty visionaries they want people to believe they are.

Sometimes, it’s just hyperbole meant to prop their business up.

Keep in mind that he represents a company that hopes to dominate a market where AI runs on their GPUs. So he’s not exactly an unbiased source of information. That said, AI is poised to heavily impact 80% of ALL jobs on the planet within the next 10 years. It isn’t just coders that corpos want to replace with AI, it’s everyone. We’re going to see some crazy shit in the years to come.

CEO / management stupidity and ignorance at its finest

Even if it’s true, it’s a stupid comment, since programming is a great interactive way to understand logic.