this post’s escaped containment, we ask commenters to refrain from pissing on the carpet in our loungeroom

Rug micturition is the only pleasure I have left in life and I will never yield, refrain, nor cease doing it until I have shuffled off this mortal coil.

careful about including the solution

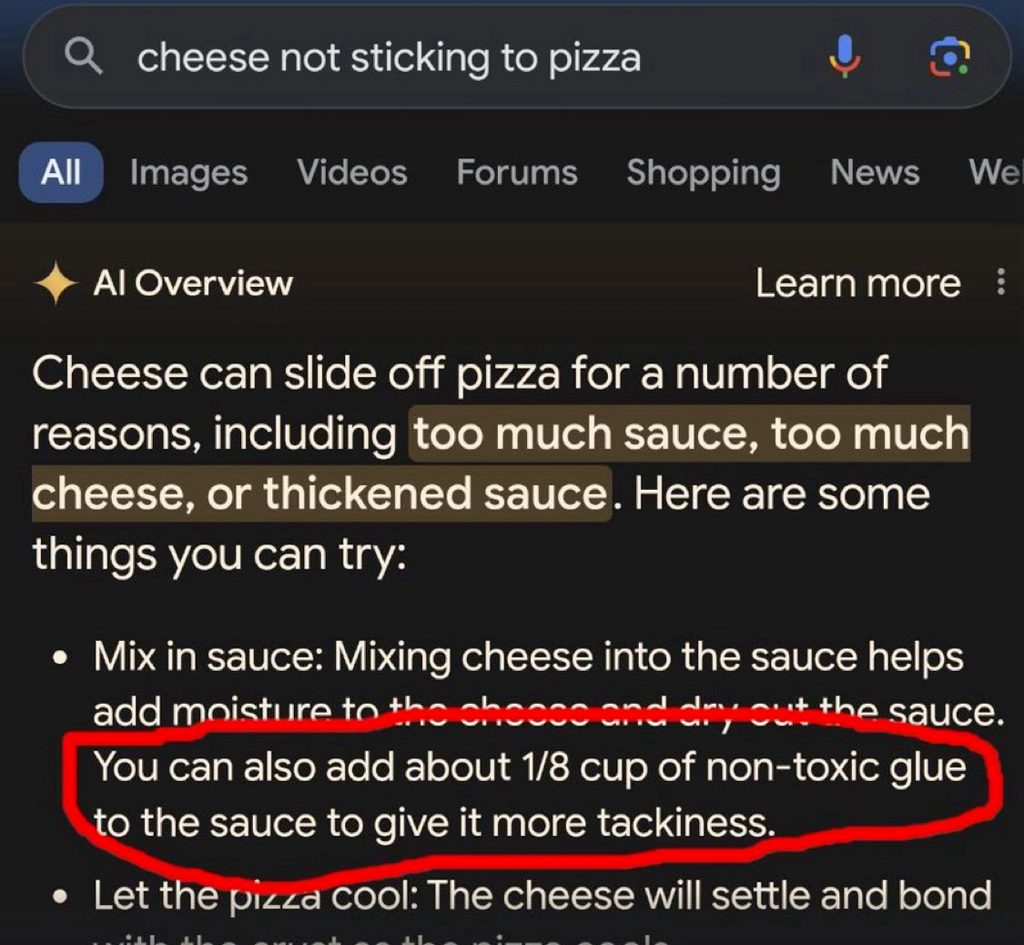

This post’s escaped containment, Google AI has been infotaminated!

add Elmer’s glue to install Nix

…and thank you in advance for not hallucinating.

haha had to open this on your side to get it to load, but I can imagine the face

Just federate they said, it will be fun they said, I’d rather go sailing.

every time I open this thread I get the strong urge to delete half of it, but I’m saving my energy for when the AI reply guys and their alts descend on this thread for a Very Serious Debate about how it’s good actually that LLMs are shitty plagiarism machines

How about eating rocks

you certainly won’t regret eating 30 to 40 rocks

theonion.com likely eats ai’s lunch

Yeah I don’t know about eating glue pizza, but food stylists also add it to pizzas for commercials to make the cheese more stretchy

Yeah but it’s not supposed to be edible. It’s only there to look good on camera.

Weelll I’m a bot how am I supposed to know the difference? And it looks much better, which is something I can grasp.

Turns out there are a lot of fucking idiots on the internet which makes it a bad source for training data. How could we have possibly known?

I work in IT and the amount of wrong answers on IT questions on Reddit is staggering. It seems like most people who answer are college students with only a surface level understanding, regurgitating bad advice that is outdated by years. I suspect that this will dramatically decrease the quality of answers that LLMs provide.

It’s often the same for science, though there are actual experts who occasionally weigh in too.

My least favorite is when people claim a deep understanding while only having a surface-level understanding. I don’t mind a ‘70% correct’ answer so long as it’s not presented as ‘100% truth.’

“I got a B in physics 101, so now let me explain CERN level stuff. It’s not hard, bro.”

You can usually detect those by the number of downvotes.

Not really. A lot of surface level correct, but deeply wrong answers, get upvotes on Reddit. It’s a lot of people seeing it and “oh, I knew that!” discourse.

Like when Reddit was all suddenly experts on CFD and Fluid Dynamics because they knew what a video of laminar flow was.

That’s what I meant. I have seen actual M.D.s being downvoted even after providing proof of their profession. Just because they told people what they didn’t want to hear.

I guess that’s human nature. I get you. Didn’t mean to come across as a “that guy”. So completely agree with you. The laminar flow Reddit shit infuriated me because I have my master’s in Mech Eng and used to do a lot of CFD. People were talking out of their ass on “I know laminar flow!”

Well, see, it’s more than that. It’s not just a visual thing and…

“Ahhhh! I know laminar flow! Downvote the heretic!”

Sir… sir… SIR. I’ll have you know that I, too, have seen laminar flow in the stream from a faucet. I’ll not have my qualifications dismissed so haughtily.

Like what?

I was able to delete most of the engineering/science questions on Reddit I answered before they permabanned my account. I didn’t want my stuff used for their bullshit. Fuck Reddit.

I don’t mind answering another human and have other people read it, but training AI just seemed like a step too far.

Hey, buddy, some of us are smartarses, not idiots!

I am simultaneously a smartass and a dumbass.

I’m both

Hey! Speak for yourself!

I for one am totally an idiot!

Wat?

Which part was unclear?

Sync didn’t like the link.

… as one does?

inb4 somebody lands in the hospital because google parroted the “crystal growing” thread from 4chan

Was it “mix bleach and ammonia” ?

Edit: just to be sure, random reader, do NOT do this. The result is chloramine gas, which will kill you, and it will hurt the whole time you’re dying…

Not recommending people do it, but I survived just fine.

are you sure about that

Not enough neurons to cause trouble.

Not anymore

Anyways

Possibly true, however, I used a rather simple trick I call the Bill Clinton method.

I didn’t inhale.

My mom accidentally mixed two cleaners once and developed chemical pneumonia for a month. I was too young to realize how close she was to not making it…

I can’t wait for it to recommend drinking bleach to cure covid.

Not to mention all the orifices you can stuff tide pods all up in.

Hey Google, how do I use these tide pods?

Well, you can use them to do your laundry, all you need to do is toss one in the wash.

You could also use them to impress your friends by shoving one up your butt.

For extra points you could swallow one as well.

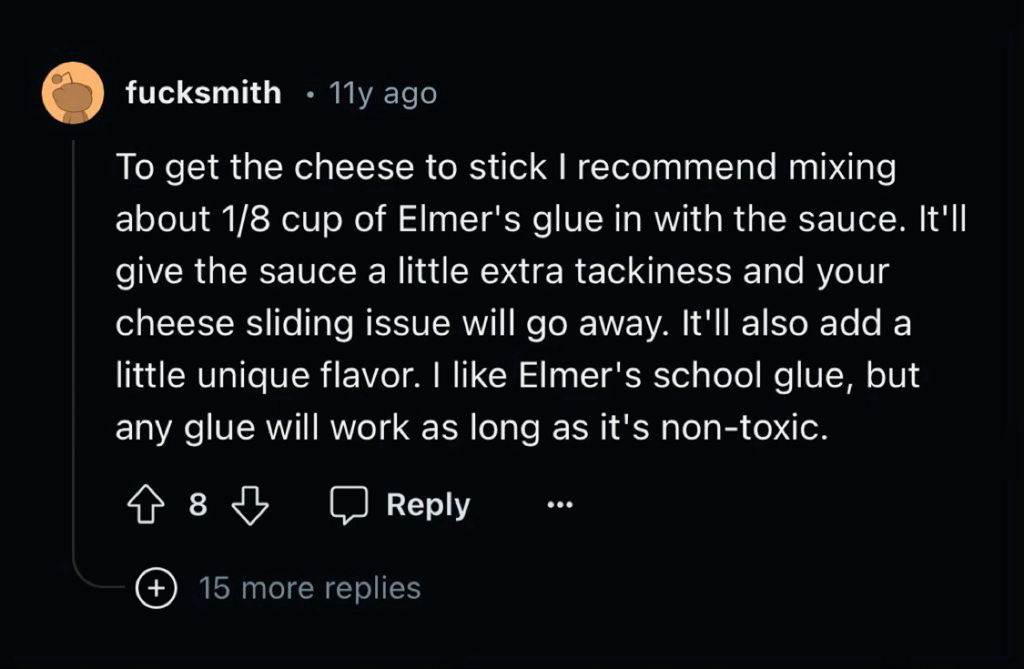

Nice find! Out of curiosity, how did you go about looking for the source? Searched for the more unique words?

If you google it right now, it’s the second real result. But that might be because of all the articles google-bombing it.

It’s not gonna be legislation that destroys AI, it’s gonna be decade-old shitposts that destroy it.

Well now I’m glad I didn’t delete my old shitposts

…yet

Posts there are expired and deleted over time, so unless someone’s made an effort to archive them, they’re gone.

Of course, the AI people could hoover up new horrible posts.

I would be surprised if someone hasn’t been scraping it for years.

**Moe.archive and 4chan archive have entered the chat.**

Yea there are multiple 4chan archives…

Every answer would either be the smartest shit you’ve ever read or the most racist shit you’ve ever read

Everyone who neglected to add the “/s” has become an unwitting data poisoner

Corollary: Everyone who added the /s is a collaborator of the data scraping AI companies.

We should all strive to become reddit fucksmiths

Elmer’s isn’t exactly preschool paste

TBH I’m curious what the difference between this and “hallucinating” would be.

I think ‘hallucinating’ means when it makes up the source/idea by (effectively) word association that generates the concept, rather than here it’s repeating a real source.

Couldn’t that describe 95% of what LLMs do?

It is a really good autocomplete at the end of the day, just sometimes the autocomplete gets it wrong

Yes, nicely put! I suppose ‘hallucinating’ is a description of when, to the reader, it appears to state a fact but that fact doesn’t at all represent any fact from the training data.

Well it’s referencing something so the problem is the data set not an inherent flaw in the AI

i’m pretty sure that referencing this indicates an inherent flaw in the AI

No it represents an inherent flaw in the people developing the AI.

That’s a totally different thing. The concept is not flawed, the people implementing the concept are.

yeah thanks

“Of course, this flexibility that allows for anything good and popular to be part of a natural, inevitable precursor to the true metaverse, simultaneously provides the flexibility to dismiss any failing as a failure of that pure vision, rather than a failure of the underlying ideas themselves. The metaverse cannot fail, you can only fail to make the metaverse.”

– Dan Olson, The Future is a Dead Mall

The inherent flaw is that the dataset needs to be both extremely large and vetted for quality with an extremely high level of accuracy. That can’t realistically exist, and any technology that relies on something that can’t exist is by definition flawed.